Intelligence, expertise, and experience are widely associated with sound decision-making. In business, academia, and technology, highly capable individuals are often expected to produce more accurate judgments than others. Yet history repeatedly shows that even the most intelligent professionals can make confident mistakes. In many cases, these errors occur not because of a lack of knowledge, but because certain cognitive and structural factors make highly skilled individuals particularly vulnerable to overconfidence.

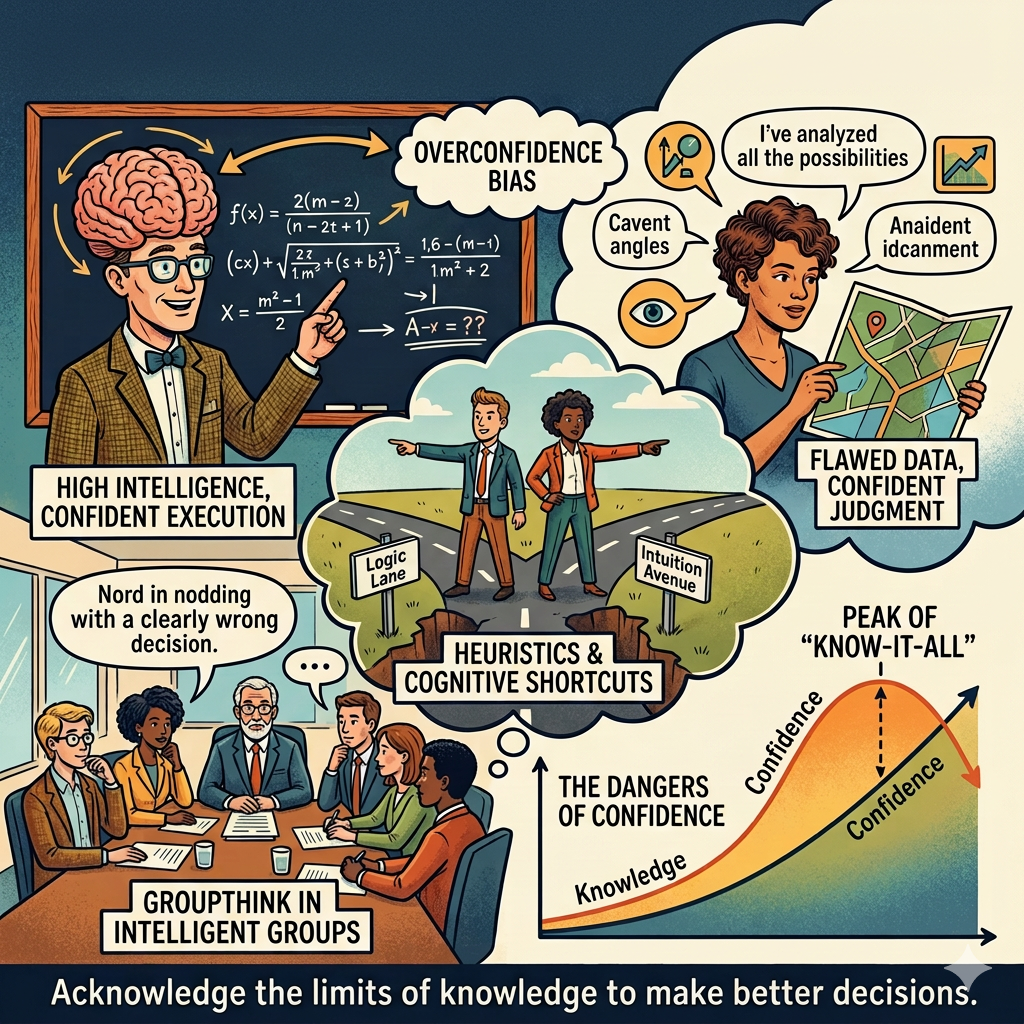

The first reason lies in the relationship between expertise and confidence. Expertise allows individuals to recognize patterns quickly and form judgments based on experience. This ability is valuable in many contexts because it speeds up decision-making. However, when experts rely too heavily on familiar patterns, they may assume that current situations resemble past experiences even when important differences exist. This can lead to confident but incorrect conclusions.

Another factor is confirmation bias, the tendency to seek information that supports existing beliefs while overlooking evidence that contradicts them. Highly knowledgeable individuals often possess strong theoretical frameworks that help them interpret complex problems. While these frameworks are useful, they can also filter incoming information in ways that reinforce prior assumptions. As a result, experts may unintentionally interpret ambiguous evidence as validation of their existing views.

Professional reputation can also influence decision behavior. Individuals recognized for their expertise may feel pressure to maintain the appearance of certainty. Admitting uncertainty or revising an earlier judgment can feel risky when others expect confident answers. This social pressure may encourage experts to defend their original conclusions even when new information suggests they should reconsider.

The complexity of modern decision environments further contributes to confident mistakes. In fields such as finance, marketing analytics, engineering, and policy design, professionals must interpret large volumes of data. Analytical tools and models help simplify this information, but they also introduce assumptions. When experts trust these models without fully examining their limitations, they may become overly confident in the results.

Another contributor is overfitting to experience. Experts often develop mental models based on specific industries, time periods, or market conditions. These models work well when underlying conditions remain stable. However, when environments change through technological disruption, economic shifts, or evolving consumer behavior, previously reliable assumptions may no longer apply. Expertise based on past conditions can then lead to confident misinterpretation of new realities.

Smart individuals may also underestimate the role of randomness in outcomes. When analyzing complex systems, it is tempting to attribute results to deliberate strategies or identifiable causes. However, many business outcomes involve elements of chance. When successful outcomes are attributed entirely to skill rather than partially to favorable conditions, confidence in decision frameworks can become exaggerated.
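
A small simulation can make the role of chance concrete. The sketch below is illustrative only: the function name `simulate_track_records`, the population of 1,000 decision-makers, and the ten yes/no decisions each are assumptions made up for this example. Everyone has exactly the same 50% chance of being right, so any difference between track records comes from luck alone.

```python
import random


def simulate_track_records(n_people=1000, n_decisions=10, p_success=0.5, seed=0):
    """Return the number of successful decisions for each simulated person.

    Every person has the same success probability, so differences between
    track records are produced by chance, not by skill.
    """
    rng = random.Random(seed)  # seeded so the run is reproducible
    return [
        sum(rng.random() < p_success for _ in range(n_decisions))
        for _ in range(n_people)
    ]


records = simulate_track_records()
best = max(records)
average = sum(records) / len(records)
n_strong = sum(r >= 8 for r in records)  # people who look impressively accurate

print(f"average successes: {average:.2f} out of 10")
print(f"best track record: {best} out of 10")
print(f"people with 8 or more successes: {n_strong}")
```

With these assumed settings, about 5% of people (56 in 1,024 on average) are expected to get at least eight of ten decisions right by luck alone. Anyone looking only at those records could easily credit skill that the simulation never included.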

Another cognitive dynamic involves narrative construction. Intelligent individuals are often skilled at creating coherent explanations for complex events. While this ability improves communication and reasoning, it can also lead to oversimplified interpretations. When experts construct persuasive narratives about why something happened, those narratives may appear convincing even if key variables were overlooked.

Group dynamics can reinforce confident mistakes as well. When teams consist of highly capable individuals with similar training or professional backgrounds, they may share similar assumptions. This homogeneity can reduce critical questioning and lead to collective overconfidence. Without diverse perspectives, teams may fail to challenge flawed reasoning.

Data interpretation provides another example. Experts trained in analytics may have strong confidence in statistical outputs. Yet statistical results depend heavily on data quality, model design, and contextual interpretation. When professionals rely on analytical outputs without questioning underlying assumptions, they may place excessive confidence in results that contain hidden limitations.

The structure of incentives in organizations can also play a role. In many professional environments, individuals are rewarded for decisive action and confident leadership. Expressing doubt or exploring alternative interpretations may appear less decisive. Over time, this incentive structure can encourage confident assertions even when uncertainty remains significant.

Importantly, intelligence itself does not eliminate cognitive biases. In some cases, it may amplify them. Highly analytical individuals can generate sophisticated arguments that support their existing views, making it easier to rationalize incorrect conclusions. The ability to construct complex reasoning does not guarantee that the reasoning is correct.

Recognizing these dynamics does not diminish the value of expertise. On the contrary, expertise remains essential for interpreting complex systems and guiding strategic decisions. The key challenge is ensuring that expertise is accompanied by intellectual humility and structured decision processes.

One effective safeguard is encouraging structured debate within teams. When organizations create environments where questioning assumptions is welcomed, confident errors are more likely to be detected early. Diverse perspectives help expose blind spots that individuals may overlook.

Another important practice is separating data analysis from interpretation. Analysts can present findings while decision-makers evaluate strategic implications independently. This separation encourages critical examination of conclusions rather than automatic acceptance.

Scenario analysis can also reduce overconfidence. By exploring multiple possible outcomes rather than relying on a single forecast, decision-makers acknowledge uncertainty and prepare for different conditions. This approach reduces the risk of treating one interpretation as definitive.
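
As a rough illustration of scenario analysis, the sketch below projects a single figure under three different growth assumptions instead of one forecast. The scenario names, growth rates, starting value, and the `project_revenue` helper are all hypothetical and chosen only to show the mechanics.

```python
def project_revenue(base_revenue, annual_growth, years):
    """Compound a starting revenue figure at a fixed annual growth rate."""
    return base_revenue * (1 + annual_growth) ** years


# Hypothetical scenarios: each one is an explicit assumption, not a prediction.
scenarios = {
    "pessimistic": -0.05,
    "baseline": 0.03,
    "optimistic": 0.10,
}

# Project three years ahead from an assumed starting value of 100.
projections = {
    name: project_revenue(100.0, growth, years=3)
    for name, growth in scenarios.items()
}

# The spread between the best and worst case is a simple measure of
# how much uncertainty a single-point forecast would hide.
spread = max(projections.values()) - min(projections.values())

for name, value in projections.items():
    print(f"{name:>12}: {value:.1f}")
print(f"{'spread':>12}: {spread:.1f}")
```

Under these assumed rates the three-year projections range from roughly 86 to 133. Reporting that range alongside the baseline makes the uncertainty visible rather than implied.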

Continuous learning is equally important. Experts who regularly revisit their assumptions and update their models based on new evidence are less likely to remain anchored to outdated frameworks. Intellectual flexibility allows expertise to evolve alongside changing environments.

Organizations can further reduce confident mistakes by documenting decision processes. When teams record assumptions, data sources, and reasoning behind major decisions, they create opportunities for later review. This transparency helps identify where errors originated and improves future judgment.
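
One possible shape for such a record is sketched below. The `DecisionRecord` class and the `calibration_gap` helper are hypothetical. The `assumptions`, `data_sources`, and `reasoning` fields come from the practice described above, while the stated `confidence` and the later `outcome` are additions assumed here so that a review can compare how sure a team was with how often it turned out to be right.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class DecisionRecord:
    title: str
    decided_on: date
    assumptions: list[str]
    data_sources: list[str]
    reasoning: str
    confidence: float  # assumed field: stated probability (0 to 1) of success
    outcome: Optional[bool] = None  # assumed field: filled in at review time


def calibration_gap(records):
    """Mean stated confidence minus observed success rate.

    Only records that have been reviewed (outcome is not None) are counted.
    A positive gap suggests overconfidence. Returns None if nothing has
    been reviewed yet.
    """
    reviewed = [r for r in records if r.outcome is not None]
    if not reviewed:
        return None
    mean_confidence = sum(r.confidence for r in reviewed) / len(reviewed)
    success_rate = sum(r.outcome for r in reviewed) / len(reviewed)
    return mean_confidence - success_rate


# Hypothetical entries, for illustration only.
log = [
    DecisionRecord("Enter new market", date(2023, 3, 1),
                   ["Demand mirrors home market"], ["Customer survey"],
                   "Survey interest was high.", confidence=0.9, outcome=False),
    DecisionRecord("Renew supplier contract", date(2023, 6, 15),
                   ["Prices stay stable"], ["Supplier quotes"],
                   "Lowest quoted cost.", confidence=0.9, outcome=True),
    DecisionRecord("Launch loyalty program", date(2024, 1, 10),
                   ["Repeat purchases increase"], ["Pilot results"],
                   "Pilot showed higher retention.", confidence=0.8),
]

gap = calibration_gap(log)
print(f"calibration gap over reviewed decisions: {gap:+.2f}")
```

In this made-up log the two reviewed decisions were each rated 90% likely to succeed, but only one did, so the gap is about +0.40. The third decision has no outcome yet and is excluded from the review.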

Ultimately, confident mistakes among intelligent individuals reflect the complexity of decision-making rather than a failure of intelligence. Complex systems rarely produce perfectly predictable outcomes, so even well-informed decisions can lead to unexpected results.

The key insight is that intelligence and expertise should be paired with humility, critical thinking, and collaborative evaluation. When organizations recognize that even smart people can be confidently wrong, they become more open to questioning assumptions and refining their strategies.

In dynamic environments, the most reliable advantage is not simply intelligence but the willingness to challenge one’s own conclusions. By combining expertise with disciplined skepticism, individuals and organizations can reduce the likelihood of confident mistakes while improving the quality of their decisions.